THE HACKER NEWS

levkk

3 hours ago

PgDog is funded and coming to a database near you

PgDog is a connection pooler, load balancer, and sharding proxy for PostgreSQL. Scale Postgres horizontally without rewriting your application....

2 DAYS AGO

NIELZ_R

Reviving Papers with Code

2 HOURS AGO

SYLLOGISTIC

The iPad was on Tailscale: a WebRTC debugging story

20 HOURS AGO

PLASMA

Upcoming breaking changes for npm v12

3 DAYS AGO

BREVE

Magnetoelectric antennas could transform how underwater robots talk

16 HOURS AGO

AHLCVA

German ruling declares Google liable for false answers in AI Overviews

19 HOURS AGO

APIKE

Surprise, pay $1000

A DAY AGO

SWOLPERS

What it feels like to work with Mythos

8 HOURS AGO

GRZRACZ

Show HN: macOS menu bar gauges for your Claude Code quota

4 DAYS AGO

TERNAUS

OpenCV 5 Is Here: The Biggest Leap in Years for Computer Vision

3 HOURS AGO

JOE_COOL

Linux latency measurements and compositor tuning [KWin Wayland]

4 DAYS AGO

1659447091

The oldest surviving animated feature film at 100

4 DAYS AGO

ZDW

More Molly Guards

edent

5 hours ago

Building an HTML-first site doubled our users overnight

My client was a utility company, and they had a big problem...

thenewedrock

an hour ago

Postgres by Example

Yet another postgresql book! Contribute to boringcollege/postgres-by-example development by creating an account on GitHub....

febin

2 days ago

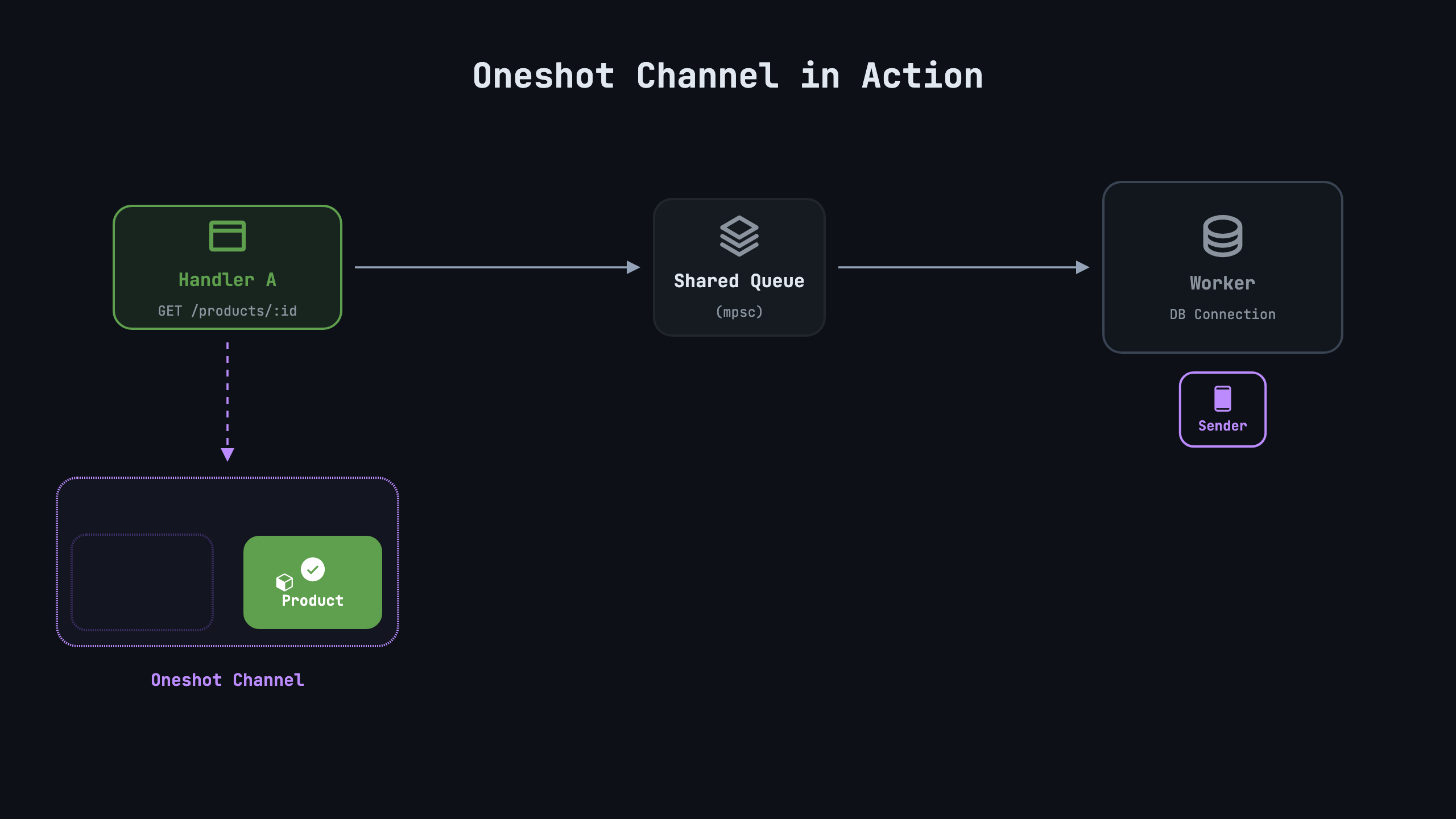

Who Runs Your Rust Future? Hands-On Intro to Async Rust

Ever wonder what actually runs your async code in Rust? Build it yourself: the smallest engine that runs an async job to completion, and learn how futures and runtimes really work along the ...

NaOH

2 days ago

'They take you out of life, out of time': a journey into Spain's cave paintings

The long read: For tens of thousands of years, these Palaeolithic artworks were unseen. When they were rediscovered, onlookers marvelled at their vivid beauty. One of the world’s leading exp...

timsneath

17 hours ago

macOS Container Machines

A tool for creating and running Linux containers using lightweight virtual machines on a Mac. It is written in Swift, and optimized for Apple silicon. - apple/container...

meetpateltech

an hour ago

DiffusionGemma: 4x Faster Text Generation

An overview of DiffusionGemma, an exceptionally fast text generation model with up to 4x faster speeds....

jimsojim

26 minutes ago

Textbooks Should Be Free

Abstract: This article is a short (well, not that short) summary of our experiences in writing a free online text book known as Operating S......

raffael_de

10 hours ago

Mercedes‑Benz starts large‑scale production of electric axial flux motor

Exclusive insights and individual offers: Experience the maximum of digital li...

https://media.mercedes-benz.com/en/article/bebac2af-acdc-465a-9538-adb0bf3d8ccf

Philpax

a day ago

Claude Fable 5

Today we’re launching Claude Fable 5: a Mythos-class model that we’ve made safe for general use....

sblank

2 days ago

Hacking for Defense Stanford 2026 – Lessons Learned Presentations

We just wrapped up our Hacking for Defense class at Stanford. This was the 11th year we’ve taught Hacking for Defense, and the impact of asymmetric warfare, (drones, off-the-shelf technologi...

speckx

4 hours ago

I Hate (Most) Keyboard 'Fn' Keys

Sometimes keyboards come with a 'Fn' key that allows some of their context-sensitive keys to do particular general-purpose functions. Sometimes these are implemented in careful, considerate ...

d3Xt3r

12 hours ago

Chrome is looking to permanently drop MV2 extension

Google Chrome is looking to finish off the various bypasses that help uBlock Origin and other such MV2 extensions to keep functioning. Edge and Opera could soon follow too....

ozcap

19 hours ago

RIP software hackathons. Long live the hardware hackathon

Oscar's thoughts too large for a tweet...

yimby

15 hours ago

Rich Sutton on AI creativity and discovery

A new and possibly controversial perspective:

In this video, I explain the sense in which generative AI trained by supervised learning is incapable of making novel discoveries.

https://t.co/...

PaulHoule

3 days ago

How do you design a $30k electric pickup? Inside Ford's skunkworks

We tour Ford’s top-secret Electric Vehicle Development Center in California....

Brajeshwar

an hour ago

BYD to install 5-minute EV chargers across Europe

It wants to build 3,000 chargers across the continent by 2027....

5:20:31 PM WEDNESDAY JUNE 10, 2026
